Earlier this week, Google launched its Gemini AI platform, which ‘wowed’ the tech world. A video posted on YouTube showcased the new AI model’s ability to process and reason with text, images, audio, video, and code. However, it has since come to light that Google staged the hands-on video demonstration of Gemini AI.

This article was originally published by ZeroHedge.

Bloomberg reports that Google modified interactions with Gemini AI to create the demonstration video. The video is titled “Hands-on with Gemini: Interacting with Multimodal AI.”

Google admitted in the video’s description: “For the purposes of this demo, latency has been reduced, and Gemini outputs have been shortened for brevity.” In other words, the model takes much longer to respond than the video showed.

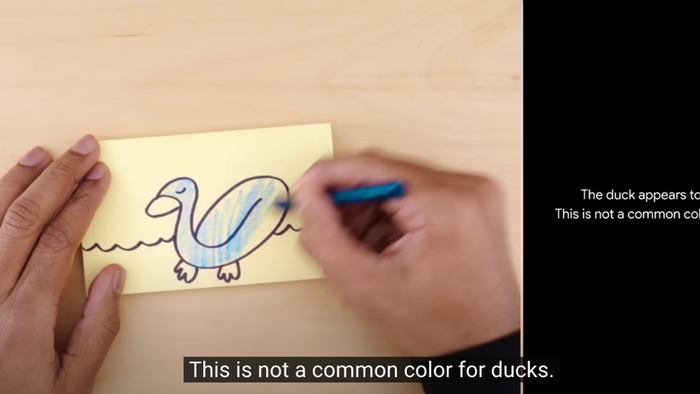

Gemini wasn’t responding to real-world prompts. According to Bloomberg, it was identifying what was being shown in still images:

In reality, the demo also wasn’t carried out in real time or in voice. When asked about the video by Bloomberg Opinion, a Google spokesperson said it was made by “using still image frames from the footage, and prompting via text,” and they pointed to a site showing how others could interact with Gemini with photos of their hands, or of drawings or other objects. In other words, the voice in the demo was reading out human-made prompts they’d made to Gemini, and showing them still images. That’s quite different from what Google seemed to be suggesting: that a person could have a smooth voice conversation with Gemini as it watched and responded in real-time to the world around it.

Google shares rose on the Gemini AI release video, only to sell off after reports of the fake video started hitting tech blogs overnight, with Bloomberg reporting on it Friday morning.

Bloomberg’s Parmy Olson first reported that something was off with the video around 3 pm Thursday:

✨More from Google on THAT Gemini “duck” video:

“We created the demo by capturing footage in order to test Gemini’s capabilities on a wide range of challenges,” a spokesperson says. “Then we prompted Gemini using still image frames from the footage, & prompting via text.” (1/5) pic.twitter.com/zeia8gkX6u

— Parmy Olson (@parmy) December 7, 2023

A Google spokesperson said that “the user’s voiceover is all real excerpts from the actual prompts used to produce the Gemini output that follows.”

X users were not happy with Google’s video, indicating they felt misled by the tech giant:

Yesterday, Google shocked the world with its new AI, “Gemini.”

But it turns out the video was fake: the A.I. *cannot* do what Google showed.

It’s my opinion, as a lawyer and computer scientist, that (1) Google lied and (2) it broke the law. 🧵 pic.twitter.com/VDfvcwfQeO

— Clint Ehrlich (@ClintEhrlich) December 7, 2023

So do I understand correctly, this Gemini video was not realtime? So fake it till you make it? Again, American prototype-based scheme, just some video editing to drop some fascinating demo for demo purposes…

Multimodal AI isn’t a big deal anymore, for now, what could be a big…

— Anton Prokhorov (@hollandseuil) December 7, 2023

A lot of AI fraud this week. First the AI posing project and now the Google Gemini video. Probably seeing a slow down in AI breakthroughs and people don’t want to let go of the hype cycle

— Odell Blackmon III (@nodellodell) December 8, 2023

It’s insane how deceptive that video demo was. It was all cherry picked. They used static images with prompts and then made it appear gemini was analyzing the video in near real time. The whole video demo was a fraud.

Google giving repeat 2018 fake ai assistant demo vibes. https://t.co/ObhLxmKJBb

— Steven Moon (@stevenmoon) December 8, 2023

The bottom line is to stay skeptical, especially of claims made by tech companies showboating their latest and greatest chatbots. The staged Gemini demo is an important reminder to be cautious about the AI bubble.